FinTech Explained: Developing a modern back-end – the pros and cons of popular approaches

FinTech Explained: What is it?

FinTech is an industry oriented towards developing novel financial applications and alternatives to traditional ways of dealing with finance. But building a FinTech application is not easy, and many people need to have FinTech explained to them, both when it comes to development and general use. Mooncascade’s mission for today is to do just that. This ‘FinTech Explained’ guide will go through the key principles that FinTech developers need to know in order to make their projects a success.

Before we get started with our ‘FinTech Explained’ guide in earnest, however, we want to provide a little background information. Today’s financial market is a true digital battlefield. Its landscape is vast, its opportunities are endless, and the weapons available to its warriors are varied. This battlefield is regulated by different authorities who always seem to be raising the entry barrier for newcomers. The fact that you need to react quickly to the changes and requirements they impose to stay in the game is just the icing on the cake.

There are many ways of developing modern software applications. One of the biggest challenges that any development team faces is how to choose the right tools for doing so from the ocean of technologies out there.

Having built core banking solutions for Solarisbank and been at the forefront of adopting popular approaches here at Mooncascade, I will use this ‘FinTech Explained’ guide to shed some light on how you can build winning FinTech products. I’ve included a list of pros and cons for each approach, so you should walk away from this article with some food for thought.

Keep in mind that a FinTech application always depends on the context and specific requirements it’s built for, and that there’s no such thing as a one-size-fits-all solution. Moving ahead though, let’s have a look at some key terms you’ll need to be familiar with before we really get started with our ‘FinTech Explained’ guide.

FinTech Explained: Some Key Terms

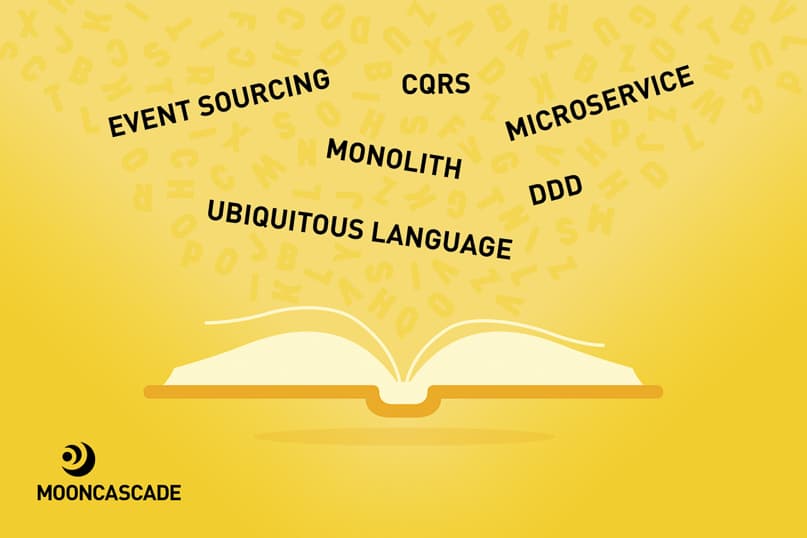

- Monolith: usually a single-tiered, self-contained application that consists of multiple components / modules (UI, data access, domain logic) which allows for completing a set of business functions.

- Microservice: a self-contained component of business functionality centered around one particular task.

- Event Sourcing: a design pattern that allows for storing an application state as a sequence of events. In other words, not only does event sourcing enable you to get the final state of the application (or its state at some earlier point in time), it also allows you to see the entire history of how the application has reached said state.

- CQRS or Command Query Responsibility Segregation: a design pattern that does what its name says: it separates the Command model (write/update state) from the Read model (query, reports, read state) in your application. These models are usually different object models or services, and they can run on different hardware.

- DDD or Domain Driven Design: an approach to software development for building a rich model that reflects and follows business rules and implements business processes. It’s a very good tool for organizing lots of messy logic in complex domains.

- Ubiquitous Language: one of the most important parts of DDD, in my opinion. This part forces all parties in product development (domain experts, developers, managers, etc.) to determine and follow the rigorous language of the domain model that is reflected in the names of processes, entities, actions, events, and so on.

“Are you a Monolith or a Microservice?”

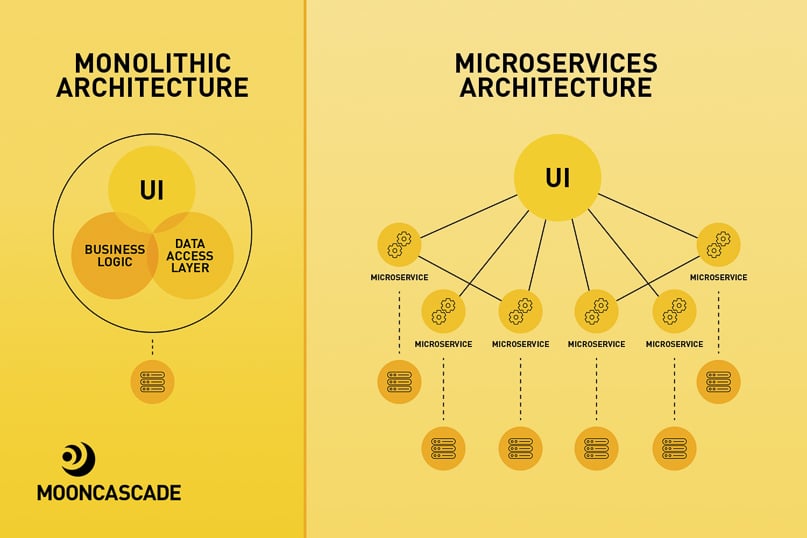

FinTech Explained: Monolithic Architecture

Despite the fact that many people have strong opinions about monolithic architecture, especially considering the recent boom in microservice-related topics, it’s usually the best way to start developing applications. Many successful services have started with monolithic architecture, including PayPal, Amazon.com, and Netflix.

FinTech Explained: The Pros of Monolithic Architecture

Monolithic Architecture is a perfect fit when you don’t know the clear boundaries of your system’s components or how they interact with each other; it reduces operational overhead (sometimes significantly); it simplifies developing, testing, deployment, and the implementation of cross-cutting concerns (logging, monitoring, etc.).

FinTech Explained: The Cons of Monolithic Architecture

The cons of monolithic architecture are centered around the following areas:

- Scalability

- Performance

- Development speed

- Update speed

- Reliability (code in one place breaks the whole app)

- Potential size (number of files and amount of knowledge)

- Difficulties in migrating to newer technologies

- Modularity (boundaries between modules tend to break down over time)

You’d be right to think that there are quite a few more cons than pros here. But with FinTech applications, it’s often important to get up-and-running as soon as possible, to “prove your concept” and get your first round of feedback from your client. Moreover, your initial requirements, goals, or even vision might be adjusted or shifted elsewhere as development progresses. To learn more about why a monolith can be helpful in this context, I’d recommend reading Martin Fowler’s article “MonolithFirst”.

FinTech Explained: Microservice Architecture

Microservices are still quite a hot topic nowadays, and there are lots of resources out there for using them. This is a more advanced architecture than the monolith, and adopting it typically requires an experienced team along with careful planning. However, the next step in our ‘FinTech Explained’ guide is to point out that once a monolith reaches a certain size, it’s often time to divide it into specific services. Just as we did for monolithic architecture, below you’ll find the pros and cons of using microservice architecture (especially compared to a monolithic approach):

FinTech Explained: The Pros of Microservice Architecture

- Independent scalability of services

- Continuous delivery and deployment

- High system reliability

- Frequent updates and faster development

- Improved maintainability and testability

- Low cognitive load per service

- Capability of having autonomous teams that own one or more services

- Easy to onboard and test new technologies

FinTech Explained: The Cons of Microservice Architecture

This approach entails high operational complexity. You have to deal with inter-service and inter-team communication, partial failure, cross-cutting concerns (logging, monitoring), configuration, requests that span multiple services, harder testing of interactions between services, hidden coupling, and data consistency.

Another implicit problem with this approach is that startups tend to take it by default, aiming to solve problems that might not even exist in their use case. This in turn slows down development and could possibly damage a product or even a business down the line.

For example, one of our clients, Solarisbank, started their digital banking service with a monolithic architecture, then turned to microservices once they grew big enough.

Nevertheless, this approach provides a great opportunity for your product and business to scale, grow, and evolve over time. Plus, it enables implementing distributed event-driven architecture, and helps decouple your services even more.

FinTech Explained: Use of Ubiquitous Language

Domain Driven Design (DDD) is a popular approach to building software applications. I won’t take a deep dive into this as part of this ‘FinTech Explained’ guide since it’s a broad, complex topic. Plus, it’s just as popular as microservices, so it would be easy to go wild here and thereby make our ‘FinTech Explained’ guide much longer than it needed to be.

The main concept here, the domain, is what defines your business. For example, Solarisbank’s domain is digital banking and Wise’s domain is money transfers, while Amazon has multiple domains such as e-commerce, cloud services, video streaming, etc.

With DDD, you’ll use a set of the main building blocks from Tactical Design (Entities, Value Objects, Service Objects, Aggregates, etc.) and Strategic Design (Bounded Context, Context Maps, Ubiquitous Language).

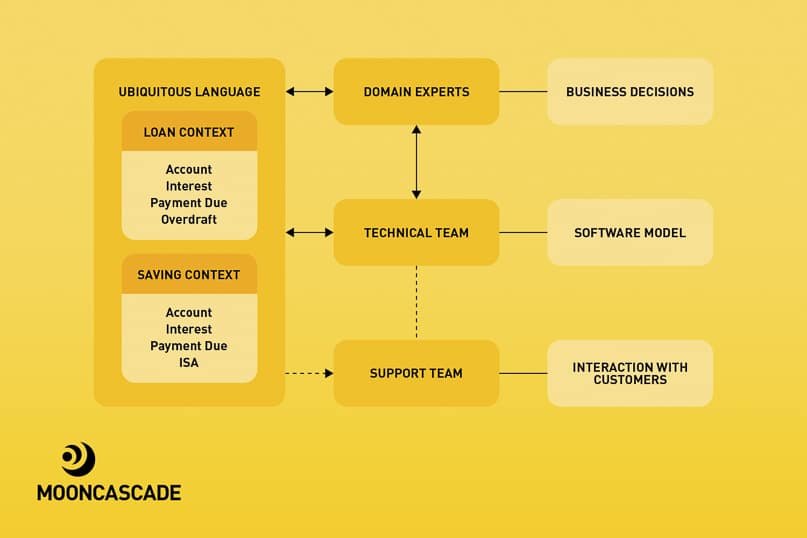

One thing I’d like to elaborate on here is Ubiquitous Language (UL) and its importance. UL is a set of terms and concepts in the business domain relevant for a specific Bounded Context and developed with the agreement of all parties — the domain experts, the technical team, and others. It helps eliminate ambiguity, understand the business, and speak using the same “language”.

Ubiquitous Language must be expressed in the software model you’ll be using, and evolve alongside the business and the model it’s applied to. The FinTech industry is really broad, so eliminating ambiguities at this stage will really help your team avoid getting lost as things move forward.

Having said all that, this ‘FinTech Explained’ guide wouldn’t be complete unless I went on to give you an example, so let’s do that with two (Bounded) contexts: Loans and Savings. The Loans context contains terms like Account (customer account), Interest (loan interest), Payment Due (loan payment date), and Overdraft. The Savings Context contains terms like Account (savings account), Interest (interest on savings), Payment Due (payment of the interest), and ISA.

Using similar terms without context and a shared understanding between teams can bring uncertainty to business decisions, break software, and affect the end user. In addition, the amount of time wasted on clarifications and “mental” mapping will only increase over time. By applying and enforcing UL within an organization, teams from different departments will be able to understand one another and tackle obstacles much more quickly. For instance, the support team might directly tell technical team members that customers complain about incorrect Interest calculation on their savings accounts (i.e., related to a Savings Context).
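To make the Loans and Savings example a bit more tangible, here is a minimal Python sketch of how the same terms can live in two Bounded Contexts without colliding. Everything in it is an assumption for illustration only: the `Loans` and `Savings` namespaces, the field names, and the deliberately naive one-year interest formula are hypothetical and not taken from any real banking system.

```python
from dataclasses import dataclass
from decimal import Decimal


class Loans:
    """Hypothetical Loans context: 'Account' and 'Interest' follow the Loans language."""

    @dataclass(frozen=True)
    class Account:
        customer_id: str
        outstanding_principal: Decimal  # money the customer owes the bank

    @staticmethod
    def interest(account: "Loans.Account", annual_rate: Decimal) -> Decimal:
        # Loan interest: charged TO the customer (simplified to one year, no compounding).
        return account.outstanding_principal * annual_rate


class Savings:
    """Hypothetical Savings context: same words, different meaning."""

    @dataclass(frozen=True)
    class Account:
        customer_id: str
        balance: Decimal  # money the bank holds for the customer

    @staticmethod
    def interest(account: "Savings.Account", annual_rate: Decimal) -> Decimal:
        # Savings interest: paid BY the bank to the customer (same simplification).
        return account.balance * annual_rate


loan = Loans.Account(customer_id="c-1", outstanding_principal=Decimal("1000"))
pot = Savings.Account(customer_id="c-1", balance=Decimal("200"))
print(Loans.interest(loan, Decimal("0.10")))    # interest the customer owes
print(Savings.interest(pot, Decimal("0.02")))   # interest the customer earns
```

In a real codebase the two contexts would more likely be separate packages or services than nested classes. The point is only that "Account" and "Interest" are always qualified by the context they belong to.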

FinTech Explained: Event Sourcing

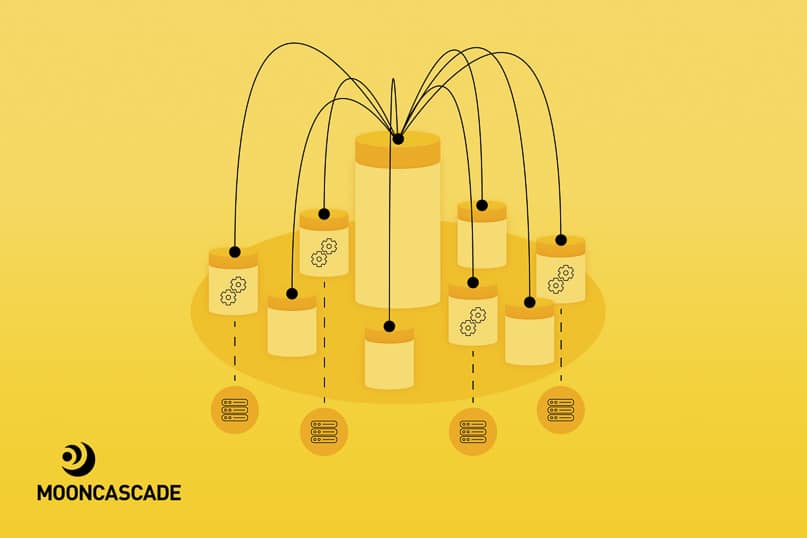

The traditional way of making an application state persistent is to save it into storage. This means that you’ll always see only the application’s final state. It’s a common way of dealing with data, especially with a CRUD monolith. As the business grows and the team decides to split a monolith into different (micro-) services, questions about how to decouple these services and deal with distributed data (when using multiple databases or a database per service) will start to pop up.

Furthermore, if a company wants to get a banking license or if it performs financial operations, it must conform to the regulatory and compliance requirements of the specific country or region in which it is operating. For example, the German Federal Financial Supervisory Authority requires an audit log of all operations taking place with customers’ accounts.

One of the ways to do this is Event Sourcing, and so, Event Sourcing is the next stop in our ‘FinTech Explained’ guide. It’s become popular due to increased interest in DDD and microservices. The essence of this design pattern is that changes to application state are stored as an append-only sequence of immutable events. Basically, it saves the history that leads to said state. By replaying these events, the state can be reconstructed either in full or as of an arbitrary point in time. Pro tip: use state snapshots to improve performance.

Usually, events represent domain-related facts that have occurred within a system. It might be a good idea to do Event Storming sessions in advance to identify these kinds of events. DDD also comes to the rescue here with the idea of Domain Events.

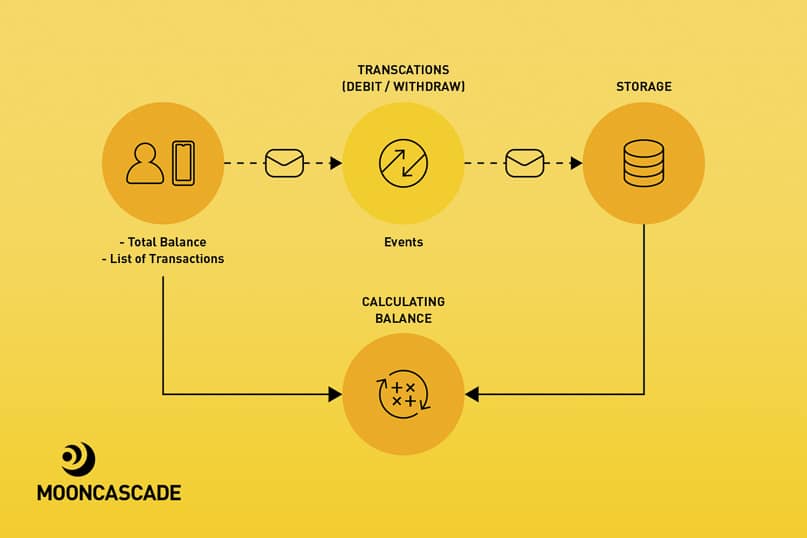

Take your bank account. Conceptually, the bank doesn’t just save your current balance; it stores the deposits and withdrawals that happen over time. For example:

- DepositMoney(100);

- DepositMoney(50);

- WithdrawMoneyFromATM(25);

- TransferMoney(100).

Your balance then gets calculated from this data. You can even request to see a list or total sum of all transactions during the last month.
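As a rough illustration of how a balance can be derived from such a history, here is a minimal Python sketch. It rests on a few assumptions of mine: the past-tense event names, the `Event` class, and the `SIGNS` mapping are hypothetical, and the transfer in the list above is treated as an outgoing one.

```python
from dataclasses import dataclass
from decimal import Decimal
from typing import Iterable


@dataclass(frozen=True)
class Event:
    kind: str        # hypothetical event name, e.g. "MoneyDeposited"
    amount: Decimal


# Assumption: +1 means the event credits the account, -1 means it debits it.
SIGNS = {
    "MoneyDeposited": 1,
    "MoneyWithdrawnFromATM": -1,
    "MoneyTransferredOut": -1,
}


def balance(events: Iterable[Event]) -> Decimal:
    """Replay the events in order and fold them into a single balance."""
    total = Decimal("0")
    for event in events:
        total += SIGNS[event.kind] * event.amount
    return total


history = [
    Event("MoneyDeposited", Decimal("100")),
    Event("MoneyDeposited", Decimal("50")),
    Event("MoneyWithdrawnFromATM", Decimal("25")),
    Event("MoneyTransferredOut", Decimal("100")),
]

print(balance(history))      # 25  (current balance)
print(balance(history[:2]))  # 150 (balance after the first two events)
```

Because the history is never overwritten, replaying only a prefix of it gives you the balance as of an earlier moment, which is what makes the audit and reporting use cases cheap.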

Events are typically stored in an ‘Event Store’. It could be MongoDB, MySQL, Apache Kafka, etc. You can have different event stores for different subdomains (or Bounded Contexts)—for example, one event store for Account & KYC and another one for Payments.

FinTech Explained: The Benefits of Event Sourcing

- All of the history relevant to your business is preserved

- Gives you the ability to determine the state of your system at any point in time

- Provides an audit log out-of-the-box that’s a natural fit for the FinTech industry’s regulations and compliance requirements

- Helps avoid the problem of storing state and publishing an event as two separate steps in event-driven systems, because saving the event is itself the atomic state change

- Highly scalable reads (stay tuned)

- Single source of truth

- Enables learning from the past, which is really important for identifying shortcomings and opportunities in a business or product

- Supports any kind of reporting, data analysis/mining, etc.

- Debugging capabilities

Of course, these benefits don’t come without a cost. This style of building applications is different and usually unfamiliar to developers, so it implies a learning curve. See the list below for some food for thought regarding Event Sourcing.

FinTech Explained: Things to consider before proceeding with Event Sourcing

- Event versioning and backward compatibility; in short, events must always be backward-compatible

- Performance: in order to perform an action using the latest state, you need to replay all of an entity’s events, though you can tackle this using snapshots

- Duplication of events in distributed systems: this can be especially important for FinTech apps, as you don’t want your customers having their accounts debited twice

- A decision to follow the Event Sourcing pattern should be made before or at early stages of the development process, because refactoring can be very tough

- Eventual consistency: this is what you’ll most likely end up with when using the ES pattern, because processing, reading, and reacting to events takes time
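The snapshot idea mentioned in the performance point can be sketched in a few lines of Python. This is a simplified illustration under my own assumptions: events are reduced to signed amounts in minor units (cents), and the `Snapshot` class and both helper functions are hypothetical names.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Snapshot:
    version: int  # how many events are already folded into `balance`
    balance: int  # amount in minor units, e.g. cents


def load_balance(events: List[int], snapshot: Optional[Snapshot] = None) -> int:
    """Rebuild the balance, replaying only the events recorded after the snapshot."""
    start = snapshot.version if snapshot else 0
    balance = snapshot.balance if snapshot else 0
    for delta in events[start:]:
        balance += delta
    return balance


def take_snapshot(events: List[int]) -> Snapshot:
    """Capture the state after all events seen so far."""
    return Snapshot(version=len(events), balance=load_balance(events))


events = [10_000, 5_000, -2_500]   # signed amounts stand in for real events
snapshot = take_snapshot(events)   # state after three events
events.append(-10_000)             # a new event arrives later

# Both calls give the same result; the second replays one event instead of four.
print(load_balance(events))
print(load_balance(events, snapshot))
```

The snapshot is only a cache: the event stream stays the source of truth, so a snapshot can be thrown away and rebuilt at any time.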

Obviously, Event Sourcing is not a good fit for every application and can be overkill. But if you need traceable changes, audit logs, the ability to easily extend a system, different forms of reporting and analysis, and modelling of alternatives, then this is a solid solution. Plus, you don’t even have to implement ES for the whole system; it can be done for only some of its components.

FinTech Explained: Command & Conquer Query

Developers generally use the same models to read and update information when building CRUD applications. This simplifies and unifies how they work with data. Also, it’s easier to understand a mental model of creating, reading, updating, and deleting a record. That is, until your system and its requirements become more sophisticated.

You may need derived fields, like the number of days left to pay a credit card bill, that are required for mobile app UIs only. You may need a list of transactions for a specific date that shows the remaining balance after each transaction. Alternatively, you might want to update your system based on business rules that accept only specific data within the model, or store data that is completely different from the data you provide the system with.

This is where CQRS (and the next part of our ‘FinTech Explained’ guide) comes to the rescue. It’s a pattern that was initially described and popularized by Greg Young. CQRS stands for Command Query Responsibility Segregation. It’s related to the idea of Command Query Separation, or CQS.

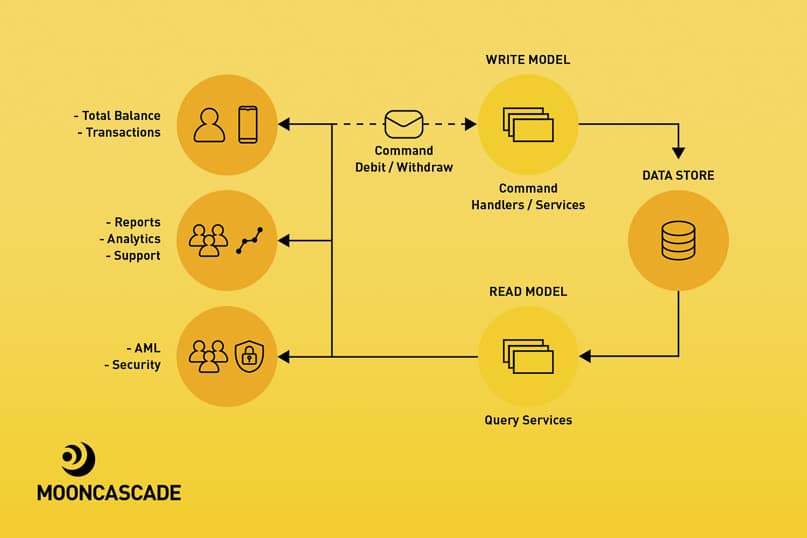

So, what is CQRS? It’s a design pattern where the model that’s used to read information (Query) differs from the model that updates it (Command). The main purpose of the pattern is to decrease the complexity of dealing with data in sophisticated, distributed, and/or event-driven systems.

Imagine an investment application where a customer can buy some form of assets. An asset’s read model might contain a ticker name, company info, historical price data, current price, balance sheet, and different ratios, with some of them being optional. By contrast, when the customer buys the asset, the write model might require data like the customer’s account number and available balance, the asset ticker and current price, and the number of shares to acquire.

Read and write models can share the same database, in which case the database acts as the communication layer between the models. Another popular approach is to have separate databases for the models—a master database and read replicas—in which case there should be some communication mechanism between them, such as messaging/events.
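Here is a small Python sketch of what the split could look like for the investment example. It is an illustration under my own assumptions rather than a reference design: `BuyAsset`, `AssetView`, `Portfolio`, and `AssetQueries` are hypothetical names, and plain dictionaries stand in for the databases.

```python
from dataclasses import dataclass
from decimal import Decimal
from typing import Dict, Optional, Tuple


@dataclass(frozen=True)
class BuyAsset:
    """Write side: the command carries only what is needed to make the decision."""
    account_number: str
    ticker: str
    shares: int
    price: Decimal


@dataclass(frozen=True)
class AssetView:
    """Read side: shaped for display, with optional fields."""
    ticker: str
    company: str
    current_price: Decimal
    pe_ratio: Optional[Decimal] = None


class Portfolio:
    """Write model: validates commands and changes state."""

    def __init__(self, balances: Dict[str, Decimal]) -> None:
        self._balances = balances
        self.positions: Dict[Tuple[str, str], int] = {}

    def available_balance(self, account_number: str) -> Decimal:
        return self._balances.get(account_number, Decimal("0"))

    def handle(self, cmd: BuyAsset) -> None:
        if cmd.shares <= 0:
            raise ValueError("shares must be positive")
        cost = cmd.price * cmd.shares
        if self.available_balance(cmd.account_number) < cost:
            raise ValueError("insufficient balance")
        self._balances[cmd.account_number] -= cost
        key = (cmd.account_number, cmd.ticker)
        self.positions[key] = self.positions.get(key, 0) + cmd.shares


class AssetQueries:
    """Read model: answers queries and never changes state."""

    def __init__(self, views: Dict[str, AssetView]) -> None:
        self._views = views

    def by_ticker(self, ticker: str) -> Optional[AssetView]:
        return self._views.get(ticker)
```

A caller would send `BuyAsset(...)` to `Portfolio.handle` and fetch `AssetView` objects through `AssetQueries.by_ticker`. Neither side needs to know the other's data shape, which is what lets them evolve and scale separately.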

FinTech Explained: The benefits of CQRS

- Helps deal with complex systems

- Separation of concerns with simpler read/write models

- Independent scaling of read and write models (services)

- Shapes data for different purposes (especially for read models)

- Naturally fits event-driven architectures (typically with Event Sourcing)

FinTech Explained: The drawbacks of CQRS

- Added complexity might reduce productivity and increase risks

- Operational and maintenance overhead

- Potential code duplication

- Eventually consistent views, though this might be fine for some domains

FinTech Explained: Friendship of CQRS and Event Sourcing

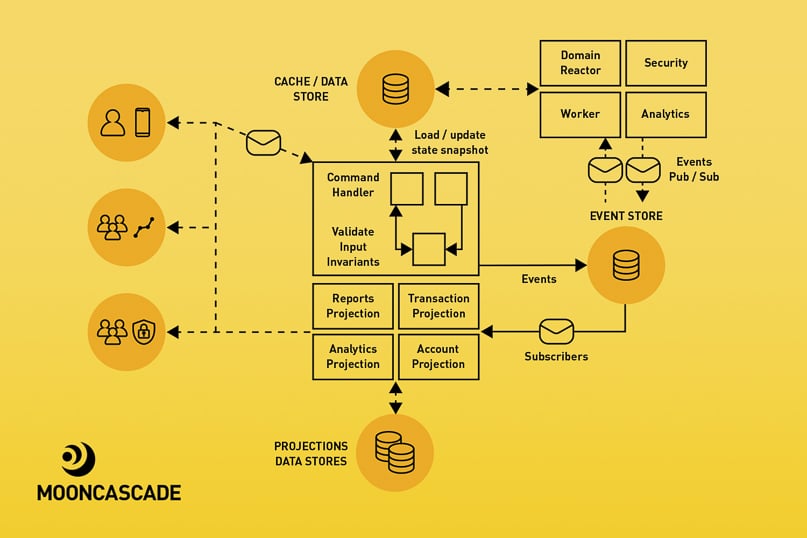

CQRS and Event Sourcing work hand-in-hand, and the next part of our ‘FinTech Explained’ guide will explain that relationship. As noted, CQRS fits event-driven architectures, and Event Sourcing in particular, very well. The reason for this is that CQRS helps resolve problems that come with ES, like read performance, event processing, and complexity.

In general, the read model consists of (query) services that expose APIs for reading data, and projectors that consume events and update databases (that is, they project the events into state). Because the event store in ES is the source of truth, it allows us to replay events and generate different views for different purposes. You can also easily remove and replace query services when requirements change and new query services get shipped.

The write model typically consists of command services and reactors. The command services accept and validate requests/commands, build an Aggregate (a DDD concept), check invariants, and then generate and store events in the event store. The reactors basically do the same, except that they are triggered by an event rather than a command, i.e., they react to events and might produce new events. Commands can be processed immediately or placed into a queue, i.e., they can be processed synchronously or asynchronously.
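The moving parts described above (command service, event store, projector) can be sketched in Python as follows. Please read it as a toy under stated assumptions: `EventStore` is an in-memory stand-in for a real store, all class names are hypothetical, and events are delivered to subscribers synchronously, whereas a real system would usually deliver them asynchronously (which is where eventual consistency comes from).

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Callable, DefaultDict, Dict, List


@dataclass(frozen=True)
class MoneyDeposited:
    account_id: str
    amount: int  # minor units, e.g. cents


class EventStore:
    """In-memory, append-only stand-in for a real event store."""

    def __init__(self) -> None:
        self._streams: DefaultDict[str, List[MoneyDeposited]] = defaultdict(list)
        self._subscribers: List[Callable[[MoneyDeposited], None]] = []

    def subscribe(self, handler: Callable[[MoneyDeposited], None]) -> None:
        self._subscribers.append(handler)

    def append(self, event: MoneyDeposited) -> None:
        self._streams[event.account_id].append(event)
        for handler in self._subscribers:  # synchronous delivery, for simplicity
            handler(event)

    def stream(self, account_id: str) -> List[MoneyDeposited]:
        return list(self._streams[account_id])


class DepositService:
    """Write side: validates the command, then records an event."""

    def __init__(self, store: EventStore) -> None:
        self._store = store

    def deposit(self, account_id: str, amount: int) -> None:
        if amount <= 0:
            raise ValueError("amount must be positive")
        self._store.append(MoneyDeposited(account_id, amount))


class BalanceProjector:
    """Read side: consumes events and keeps a query-friendly view up to date."""

    def __init__(self) -> None:
        self.balances: Dict[str, int] = {}

    def on_event(self, event: MoneyDeposited) -> None:
        current = self.balances.get(event.account_id, 0)
        self.balances[event.account_id] = current + event.amount


store = EventStore()
projector = BalanceProjector()
store.subscribe(projector.on_event)

service = DepositService(store)
service.deposit("acc-1", 10_000)
service.deposit("acc-1", 5_000)
print(projector.balances)  # {'acc-1': 15000}
```

Since the view is derived entirely from events, a new projector can be added later and fed the existing streams to build a completely different read model.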

Before we end this part of our ‘FinTech Explained’ guide, we want to say a few words about aggregates, with reference to Martin Fowler’s description: an aggregate is a collection of entities and value objects that can be treated as a single unit. One object from the collection serves as the aggregate root. All external interactions with an aggregate pass through the aggregate root. The purpose of the root is to maintain data integrity and consistency and to check business invariants. Transactions shouldn’t cross the aggregate’s boundaries. Aggregates are stored to and loaded from storage as a whole. For example, a bank might have the following requirements in order to open an account: you should be at least 18 years old and you should be a resident of the UK. These are clear business invariants that must be enforced by the account aggregate.
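The account-opening rules above could be enforced by an aggregate root along these lines. This is a minimal Python sketch with hypothetical names (`Applicant`, `Account.open`, `InvariantViolation`), and it assumes residency is recorded as an ISO 3166-1 alpha-2 country code, where the UK is "GB".

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Applicant:
    date_of_birth: date
    country_of_residence: str  # assumption: ISO 3166-1 alpha-2 code, "GB" for the UK


class InvariantViolation(Exception):
    """Raised when a business rule of the aggregate would be broken."""


def age_on(date_of_birth: date, today: date) -> int:
    """Age in full years on the given day."""
    had_birthday = (today.month, today.day) >= (date_of_birth.month, date_of_birth.day)
    return today.year - date_of_birth.year - (0 if had_birthday else 1)


class Account:
    """Aggregate root: the only way to create an account is through `open`."""

    def __init__(self, owner: Applicant) -> None:
        self.owner = owner

    @classmethod
    def open(cls, applicant: Applicant, today: date) -> "Account":
        if age_on(applicant.date_of_birth, today) < 18:
            raise InvariantViolation("applicant must be at least 18 years old")
        if applicant.country_of_residence != "GB":
            raise InvariantViolation("applicant must be a UK resident")
        return cls(applicant)
```

Passing `today` in explicitly, rather than calling `date.today()` inside the aggregate, keeps the rule deterministic and easy to test.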

FinTech Explained: Some questions to consider when using the combined CQRS + ES approach:

- How to deal with duplicated events if your message broker distributes them multiple times

- How to implement idempotency for commands

- Does your business domain accept eventual consistency?

- How to react when events are replayed: should your system notify third parties again?

- How to avoid event cycling

- How to distribute events and where to store them

- How to enforce the event schema

- How to version events

- And many more…
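To give a flavour of one possible answer to the first question, here is a Python sketch of an idempotent consumer that ignores events it has already processed. It assumes every event carries a unique `event_id`; the `AccountDebited` and `DebitConsumer` names are hypothetical, and the in-memory set stands in for a durable store.

```python
from dataclasses import dataclass
from typing import Dict, Set


@dataclass(frozen=True)
class AccountDebited:
    event_id: str    # assumption: unique per event, assigned by the producer
    account_id: str
    amount: int      # minor units, e.g. cents


class DebitConsumer:
    """Applies each event at most once, even if the broker delivers it repeatedly."""

    def __init__(self, balances: Dict[str, int]) -> None:
        self.balances = balances
        self._seen: Set[str] = set()

    def handle(self, event: AccountDebited) -> bool:
        """Return True if the event was applied, False if it was a duplicate."""
        if event.event_id in self._seen:
            return False
        self.balances[event.account_id] -= event.amount
        self._seen.add(event.event_id)
        return True


consumer = DebitConsumer({"acc-1": 10_000})
event = AccountDebited(event_id="evt-1", account_id="acc-1", amount=3_000)

print(consumer.handle(event))      # True: applied
print(consumer.handle(event))      # False: duplicate ignored
print(consumer.balances["acc-1"])  # 7000, the account is debited only once
```

In a real system the set of processed IDs and the balance update would need to be persisted together, ideally in the same transaction, otherwise a crash between the two steps brings the double-debit problem straight back.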

FinTech Explained: NewCommand (FinishArticle)

As we hope this ‘FinTech Explained’ guide has shown you, building software nowadays requires you to make a host of business-related, operational, and technical decisions. If you ask an experienced developer how you should make these decisions, the most common answer you’ll hear is: “it depends”. Your decisions should always depend on context, requirements, capabilities, expertise, experience, and money. Starting simply, improving iteratively, seeking feedback quickly, and accepting the trade-offs of the technologies you use will all help your product grow to impressive heights.

Building a FinTech product yourself?

As we draw our ‘FinTech Explained’ guide to a close, we would like to point out that building next-level FinTech products in an increasingly unpredictable, demanding, and regulation-heavy market is an uphill struggle. The market waits for nobody, so if you want a chance at winning, you’d better know how to play its game. You’ll want to join forces with someone who can give you an edge over the competition.

An experienced FinTech partner with a proven track record can do just that, by taking a big part of the product development load off your shoulders. They can make sure your platforms are built with cutting edge technology and the highest standards in security and usability, giving you time to focus on the rest of your business.

Here at Mooncascade, that’s exactly how we help our clients, whether they’re a startup or an established player. So to learn how we can help, or even to have the benefits of FinTech explained with reference to your own individual business circumstances, why not drop us a line today?

Useful Links & Statistics

- FinTech – Statistics & Facts for US & EU — https://www.statista.com/topics/2404/fintech/

- The FinTech Boom: Technology brings obsolete financial systems up to date — https://medium.com/@cassiopeiaservicesltd/the-fintech-boom-technology-brings-obsolete-financial-systems-up-to-date-956e4a120252

- Solarisbank Core Banking — The Beginning of a Journey — https://medium.com/solarisbank-blog/solarisbank-core-banking-beginning-of-a-journey-f0a94f45b0d5

- Monolithic Architecture — https://microservices.io/patterns/monolithic.html

- Don’t start with monolith – https://martinfowler.com/articles/dont-start-monolith.html

- Microservices Architecture — https://microservices.io/patterns/microservices.html

- Here is a list of articles published by companies about their experiences using microservices — https://microservices.io/articles/whoisusingmicroservices.html

- SOA vs. Microservices: What’s the Difference? — https://www.ibm.com/cloud/blog/soa-vs-microservices

- Event Sourcing Pattern

- CQRS Pattern