Face Recognition in Business — Possibilities

This article will give you an overview of the possibilities of face recognition and how to use them in your business.

If you’re interested in what face classification is, how it works, and what its use cases and limitations are, have a look at Face Recognition in business (part 1) — Use Cases and Limitations.

Benefits of face recognition for businesses

If big corporations face issues with face recognition algorithms, does that mean there’s no point in smaller firms trying to implement them? How can a company benefit from face recognition software without the resources, and access to the kinds of data, of giants like Google and Facebook?

Luckily, Google, Amazon, Facebook, MIT, and a number of other organizations researching machine learning regularly release frameworks and pre-trained models for a variety of tasks. These models are usually trained on open datasets and can then serve as the groundwork for other solutions.

Take FaceNet, for example, a model built by Google researchers and tested on a highly popular dataset called Labeled Faces in the Wild. FaceNet can be used as a feature extractor: it was trained on millions of images of different faces, so it has already learned which features are needed to recognize a face.

A new layer can be added to the neural network to learn the differences between faces. The network generates a set of numerical measurements, called an embedding, for every face (128 measurements per face). During training, embeddings are compared: embeddings of the same or a similar face should end up close together, so the distance between their vectors is small, while embeddings of two completely different faces should end up far apart.
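To make the distance idea concrete, here is a minimal sketch in plain Python of the triplet loss that FaceNet was trained with: given an anchor face, a positive (same person) and a negative (different person), the loss is zero only when the positive is closer to the anchor than the negative by at least a margin. The margin of 0.2 and the toy 3-dimensional vectors are illustrative assumptions; a real system would compute this inside a deep learning framework on 128-dimensional embeddings.

```python
import math
from typing import Sequence


def euclidean_distance(a: Sequence[float], b: Sequence[float]) -> float:
    """L2 distance between two embeddings of the same dimension."""
    if len(a) != len(b):
        raise ValueError("embeddings must have the same dimension")
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def triplet_loss(anchor: Sequence[float],
                 positive: Sequence[float],
                 negative: Sequence[float],
                 margin: float = 0.2) -> float:
    """Zero when the anchor is closer to the positive (same person) than to
    the negative (different person) by at least `margin` in squared distance;
    otherwise a positive penalty that training tries to reduce."""
    d_pos = euclidean_distance(anchor, positive) ** 2
    d_neg = euclidean_distance(anchor, negative) ** 2
    return max(d_pos - d_neg + margin, 0.0)


# Toy 3-dimensional "embeddings" (assumption: real FaceNet embeddings have 128 values).
anchor = [1.0, 0.0, 0.0]
same_person = [1.0, 0.1, 0.0]
other_person = [0.0, 1.0, 0.0]
print(triplet_loss(anchor, same_person, other_person))  # 0.0: already well separated
print(triplet_loss(anchor, other_person, same_person))  # about 2.19: wrong ordering is penalized
```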

With a pre-trained model, you can run several pictures of a particular person through the network to calculate that person’s embeddings. When a new photo of that person is taken, the system calculates the distance between its embedding and the embeddings stored in the database, and recognizes the person if the distance is below a set threshold. If someone is not in the database of precalculated embeddings, the system won’t find any embedding closer than the threshold and will output “unrecognized face”.
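The lookup in the paragraph above can be sketched as a nearest-neighbour search with a rejection threshold. This is a minimal illustration, assuming the embeddings have already been computed by some pre-trained model; the `identify` function, the toy 2-dimensional vectors and the threshold of 0.8 are hypothetical, and in practice the threshold has to be tuned on validation data for the specific model.

```python
import math
from typing import Dict, List, Sequence

UNRECOGNIZED = "unrecognized face"


def identify(query: Sequence[float],
             database: Dict[str, List[Sequence[float]]],
             threshold: float = 0.8) -> str:
    """Return the name whose stored embedding is closest to `query`,
    or UNRECOGNIZED if no stored embedding is closer than `threshold`."""
    best_name, best_distance = UNRECOGNIZED, math.inf
    for name, embeddings in database.items():
        for stored in embeddings:
            d = math.dist(query, stored)  # Euclidean distance (Python 3.8+)
            if d < best_distance:
                best_name, best_distance = name, d
    return best_name if best_distance < threshold else UNRECOGNIZED


# Hypothetical precalculated embeddings: several pictures per person.
database = {
    "alice": [[0.0, 0.0], [0.1, 0.0]],
    "bob": [[5.0, 5.0]],
}
print(identify([0.05, 0.05], database))   # alice
print(identify([10.0, -10.0], database))  # unrecognized face
```

Storing several embeddings per person, rather than one, gives the search more chances to find a close match for a new photo taken from a different angle or in different light.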

With only a few pictures per person, accuracy would be low, of course, but it could easily be improved by adding more images to each person’s class before training. An in-depth explanation of a face recognition mechanism, and of the process of training your own model for one, can be found in this great blog post.

Face detection and comparison

There are even more ways of using human faces in machine learning systems.

One possibility is to detect multiple faces and compare their features. Because the FaceNet model was trained on a vast dataset, it does a great job of extracting a face’s features from an image. Comparing faces with this model could be applied to ID verification, for example: you would show your face and an ID card to a camera, and the algorithm would determine whether the ID card actually belongs to you.
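ID verification is a 1:1 comparison rather than a search over a whole database: one embedding from the live camera image and one from the photo on the ID card. A minimal sketch, assuming both embeddings come from the same pre-trained model; it uses cosine similarity instead of Euclidean distance, and the 0.7 cut-off is a hypothetical value that would need tuning.

```python
import math
from typing import Sequence


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """1.0 for embeddings pointing the same way, 0.0 for orthogonal ones."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("zero-length embedding")
    return dot / (norm_a * norm_b)


def id_card_matches(selfie_embedding: Sequence[float],
                    id_photo_embedding: Sequence[float],
                    min_similarity: float = 0.7) -> bool:
    """1:1 check: does the live face match the photo printed on the ID card?
    `min_similarity` is a hypothetical threshold."""
    return cosine_similarity(selfie_embedding, id_photo_embedding) >= min_similarity


print(id_card_matches([0.9, 0.1, 0.4], [0.8, 0.2, 0.5]))  # True
```

For unit-length embeddings the two measures are interchangeable, since the squared Euclidean distance equals 2 − 2 × cosine similarity, so either can be used with a correspondingly converted threshold.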

Another idea is to use face detection for room occupancy estimation. With indoor surveillance cameras, it would be possible to detect and track faces within a given room and count the number of people occupying it.
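Counting people reliably means following faces from frame to frame rather than counting detections in a single frame, so that a person who briefly turns away is not dropped immediately. The sketch below is a deliberately simple greedy tracker that matches face boxes between frames by intersection-over-union (IoU). It assumes some face detector already returns `(x1, y1, x2, y2)` boxes per frame; the `OccupancyCounter` class and its thresholds are hypothetical.

```python
from dataclasses import dataclass
from typing import List, Tuple

Box = Tuple[float, float, float, float]  # (x1, y1, x2, y2) in pixels


def iou(a: Box, b: Box) -> float:
    """Intersection-over-union of two axis-aligned boxes."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


@dataclass
class Track:
    box: Box
    missed: int = 0  # consecutive frames in which this face was not seen


class OccupancyCounter:
    def __init__(self, iou_threshold: float = 0.3, max_missed: int = 5):
        self.iou_threshold = iou_threshold
        self.max_missed = max_missed
        self.tracks: List[Track] = []

    def update(self, detections: List[Box]) -> int:
        """Feed one frame's face boxes; return the current occupancy estimate."""
        unmatched = list(detections)
        for track in self.tracks:
            # Greedily pick the unclaimed detection that overlaps this track most.
            best = max(unmatched, key=lambda d: iou(track.box, d), default=None)
            if best is not None and iou(track.box, best) >= self.iou_threshold:
                track.box, track.missed = best, 0
                unmatched.remove(best)
            else:
                track.missed += 1
        # Forget faces that have been missing for too long (person left the room).
        self.tracks = [t for t in self.tracks if t.missed <= self.max_missed]
        # Detections that matched no existing track are new people.
        self.tracks.extend(Track(box=d) for d in unmatched)
        return len(self.tracks)


counter = OccupancyCounter(max_missed=1)
print(counter.update([(0, 0, 10, 10), (50, 50, 60, 60)]))  # 2
print(counter.update([(1, 1, 11, 11)]))                     # 2: second face missed once
print(counter.update([(2, 2, 12, 12)]))                     # 1: second face dropped
```

Production trackers usually add motion prediction and optimal assignment; the greedy matching here only illustrates the idea.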

It’s even possible to add face recognition to this last example. For instance, a factory could have cameras positioned so as to capture the faces of workers entering and exiting the building. Instead of using traditional badges or cards, the system could register a few images of employees from different angles and in different light conditions, and allow them to enter via face recognition software.

Of course, this isn’t a fully automated solution. Human operators would still be needed to handle notifications about unrecognized people. Before sounding the alarm, an operator would need to confirm whether the person really lacked permission to enter or was simply an employee who had grown a beard and caused the face recognition software to fail. To keep such failures rare, labeled face images would have to be collected regularly so that the model can be periodically retrained.
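The workflow described here (open the door for recognized employees, route unrecognized faces to an operator, and keep operator-confirmed images for retraining) could be sketched as follows. The `AccessController` class is hypothetical and assumes a separate recognizer that returns an employee name, or `None` for an unrecognized face.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Set, Tuple


@dataclass
class AccessController:
    authorized: Set[str]  # employees allowed to enter
    review_queue: List[str] = field(default_factory=list)               # image ids awaiting an operator
    training_data: List[Tuple[str, str]] = field(default_factory=list)  # (image id, confirmed label)

    def on_face(self, image_id: str, predicted: Optional[str]) -> str:
        """Handle one face seen at the entrance; `predicted` is None when unrecognized."""
        if predicted in self.authorized:
            return "open"
        # Notify a human operator instead of raising the alarm immediately.
        self.review_queue.append(image_id)
        return "pending review"

    def operator_decision(self, image_id: str, person: Optional[str]) -> str:
        """Record the operator's verdict; `person` is None for a genuine intruder."""
        self.review_queue.remove(image_id)
        if person in self.authorized:
            # E.g. an employee who grew a beard: keep the labeled image for retraining.
            self.training_data.append((image_id, person))
            return "open"
        return "alarm"


gate = AccessController(authorized={"alice", "bob"})
print(gate.on_face("img-001", "alice"))          # open
print(gate.on_face("img-002", None))             # pending review
print(gate.operator_decision("img-002", "bob"))  # open, and img-002 is kept for retraining
```

Only operator-confirmed labels are stored for retraining, so the model’s own mistakes are not fed back into its training data.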

Face recognition in a factory could also be used for time tracking. Employees wouldn’t have to scan their cards, as cameras would capture them entering and exiting the building. If cameras were already installed and time tracking were an important concern, this solution could be beneficial. Personally, however, I’m not a fan of compromising privacy by stationing invasive cameras everywhere.
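Turning camera sightings into working hours is mostly a matter of pairing each entry with the next exit. A minimal sketch, assuming the recognition system emits hypothetical `(employee, timestamp, "in" or "out")` events; repeated "in" sightings are ignored, and an employee who is still inside is not counted until they leave.

```python
from collections import defaultdict
from datetime import datetime, timedelta
from typing import Dict, List, Tuple

Event = Tuple[str, datetime, str]  # (employee, time, "in" or "out")


def worked_time(events: List[Event]) -> Dict[str, timedelta]:
    """Sum the time between each entry and the following exit, per employee."""
    totals: Dict[str, timedelta] = defaultdict(timedelta)
    entered: Dict[str, datetime] = {}
    for employee, ts, direction in sorted(events, key=lambda e: e[1]):
        if direction == "in":
            # Keep the first sighting if the camera sees the same entry twice.
            entered.setdefault(employee, ts)
        elif direction == "out" and employee in entered:
            totals[employee] += ts - entered.pop(employee)
    return dict(totals)


day = datetime(2024, 1, 8)
events = [
    ("ann", day.replace(hour=8), "in"),
    ("ann", day.replace(hour=12), "out"),
    ("ann", day.replace(hour=13), "in"),
    ("ann", day.replace(hour=17, minute=30), "out"),
]
print(worked_time(events)["ann"])  # 8:30:00
```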

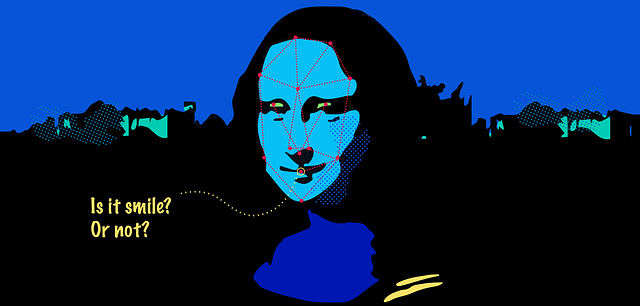

Understanding facial expressions

Let’s not forget that current algorithms also allow us to understand facial expressions. Face landmarks are used to detect the positions of a face’s eyes, nose, mouth, etc., as explained earlier. Understanding facial expressions, however, requires a second step: another CNN model that tells us which expression the positions of the landmarks represent. Such a model would need to be trained on a large number of labeled facial expressions before being deployed.
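The text describes a CNN for this second step. As a much simpler stand-in that shows the same idea, the sketch below classifies an expression from landmark positions with a nearest-centroid classifier. This is a swapped-in technique, not the CNN approach, and the three-point "mouth" landmarks and the `neutral`/`mouth_open` labels are hypothetical. Landmarks are first normalized for position and scale, so only the shape of the face matters.

```python
import math
from typing import Dict, List, Sequence, Tuple

Point = Tuple[float, float]


def normalize(landmarks: Sequence[Point]) -> List[float]:
    """Remove position and scale so only the shape of the landmarks remains."""
    cx = sum(x for x, _ in landmarks) / len(landmarks)
    cy = sum(y for _, y in landmarks) / len(landmarks)
    centered = [(x - cx, y - cy) for x, y in landmarks]
    scale = math.sqrt(sum(x * x + y * y for x, y in centered)) or 1.0
    return [c / scale for point in centered for c in point]


def fit_centroids(examples: Dict[str, List[Sequence[Point]]]) -> Dict[str, List[float]]:
    """Average the normalized landmark vectors of each labeled expression."""
    centroids = {}
    for label, faces in examples.items():
        vectors = [normalize(face) for face in faces]
        centroids[label] = [sum(col) / len(vectors) for col in zip(*vectors)]
    return centroids


def predict(landmarks: Sequence[Point], centroids: Dict[str, List[float]]) -> str:
    """Label of the closest centroid in normalized landmark space."""
    v = normalize(landmarks)
    return min(centroids, key=lambda label: math.dist(v, centroids[label]))


# Hypothetical landmarks: left mouth corner, right mouth corner, lower lip.
examples = {
    "neutral": [[(0, 0), (10, 0), (5, 1)]],
    "mouth_open": [[(0, 0), (10, 0), (5, 8)]],
}
centroids = fit_centroids(examples)
print(predict([(100, 100), (120, 100), (110, 115)], centroids))  # mouth_open
```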

One possible application here is authentication. Nowadays, we can access a variety of banking services from our phones, and when it comes to personal finances, security should always be top-notch. If a banking app asked you to scan your ID with your camera and then take a selfie to prove you’re the person you say you are, would that be enough? Someone could obtain your ID and, instead of taking a picture of themselves, take a picture of you (or use an existing image of you). An additional layer of security would be to ask the person identifying themselves to make a random facial expression, such as sticking their tongue out. It is much less likely that an impostor has pictures of you making a variety of different facial expressions.
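A minimal sketch of such a challenge-response check, assuming an expression model and a face comparison step already exist and report their results for each captured frame. The expression labels are hypothetical; the challenges are drawn with Python’s `secrets` module so that they cannot be predicted. With four possible expressions and two challenges in a row, an impostor who prepares photos in advance guesses the right sequence with probability (1/4)² = 1/16, and each extra challenge divides that by four again.

```python
import secrets
from typing import List, Sequence, Tuple

# Hypothetical labels that the expression model is assumed to output.
CHALLENGES = ("smile", "tongue_out", "raise_eyebrows", "close_eyes")


def issue_challenges(k: int = 2) -> List[str]:
    """Pick k expressions with a cryptographically secure random generator."""
    return [secrets.choice(CHALLENGES) for _ in range(k)]


def verify(challenges: Sequence[str],
           responses: Sequence[Tuple[str, bool]]) -> bool:
    """Each response is (predicted_expression, face_matches_id) for one frame.
    Every frame must show the ID holder making the requested expression, in order."""
    if len(responses) != len(challenges):
        return False
    return all(matches_id and expression == wanted
               for wanted, (expression, matches_id) in zip(challenges, responses))


print(verify(["smile", "tongue_out"], [("smile", True), ("tongue_out", True)]))   # True
print(verify(["smile", "tongue_out"], [("smile", True), ("tongue_out", False)]))  # False
```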

Another example can be found in the marketing and advertising industry. Realeyes, for instance, helps its clients evaluate the quality of their marketing campaigns and promotional videos using facial expression analysis. Before watching a campaign, viewers are asked to allow access to their computer’s camera. As they watch, the camera tracks their emotional reactions and records how the video made them feel. The client can then adjust its message to evoke or influence certain emotions in its target audience.
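To give a rough idea of how per-viewer reactions could be turned into something a marketing team can act on, here is a minimal sketch that averages hypothetical per-second emotion scores across viewers and finds the moment of the video with the strongest reaction. The data format and emotion labels are assumptions for illustration and do not describe how Realeyes actually processes its data.

```python
from collections import defaultdict
from typing import Dict, List

Frame = Dict[str, float]  # emotion -> score in [0, 1] for one second of video


def average_timeline(viewers: List[List[Frame]]) -> List[Frame]:
    """Average each emotion's score across viewers, second by second.
    The timeline is cut to the shortest viewer's recording."""
    length = min(len(v) for v in viewers)
    timeline = []
    for t in range(length):
        sums: Dict[str, float] = defaultdict(float)
        for viewer in viewers:
            for emotion, score in viewer[t].items():
                sums[emotion] += score
        timeline.append({e: s / len(viewers) for e, s in sums.items()})
    return timeline


def peak_second(timeline: List[Frame], emotion: str) -> int:
    """Second of the video where the average score for `emotion` is highest."""
    return max(range(len(timeline)), key=lambda t: timeline[t].get(emotion, 0.0))


viewers = [
    [{"joy": 0.1}, {"joy": 0.9}],
    [{"joy": 0.3}, {"joy": 0.5}],
]
print(peak_second(average_timeline(viewers), "joy"))  # 1
```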

What to keep in mind

Machine learning is a powerful tool when limited to a single, clearly defined task, though each new system or application will come with its own set of challenges.

In the case of face recognition systems, consider how much data you’re going to train your model with. The nature and volume of the data will determine which tools you need: for example, can the model be trained on a CPU, or is a cluster of powerful GPUs required? Always take a close look at the data you’re training your model with. How does the model converge? Why doesn’t it converge? Is it overfitting or underfitting? Is there enough data to represent each class? Is the data of good quality? Has it been properly transformed?
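Two of these questions, whether there is enough data per class and whether the model is overfitting, can be partly automated. A minimal sketch with hypothetical helper names and thresholds: the first function flags people with too few training images, and the second applies a common rule of thumb that training loss falling while validation loss rises for several epochs in a row suggests overfitting.

```python
from collections import Counter
from typing import Dict, Iterable, Sequence


def underrepresented_classes(labels: Iterable[str], min_images: int = 10) -> Dict[str, int]:
    """Classes (people) with fewer than `min_images` training images (hypothetical cut-off)."""
    counts = Counter(labels)
    return {label: n for label, n in counts.items() if n < min_images}


def looks_overfit(train_losses: Sequence[float],
                  val_losses: Sequence[float],
                  patience: int = 3) -> bool:
    """Heuristic: over the last `patience` epochs the training loss kept falling
    while the validation loss kept rising."""
    if len(train_losses) != len(val_losses) or len(val_losses) <= patience:
        return False
    recent_train = list(train_losses[-(patience + 1):])
    recent_val = list(val_losses[-(patience + 1):])
    train_falling = all(b < a for a, b in zip(recent_train, recent_train[1:]))
    val_rising = all(b > a for a, b in zip(recent_val, recent_val[1:]))
    return train_falling and val_rising


print(underrepresented_classes(["alice"] * 12 + ["bob"] * 3))            # {'bob': 3}
print(looks_overfit([1.0, 0.8, 0.6, 0.5, 0.4], [1.0, 0.9, 0.95, 1.0, 1.1]))  # True
```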

Face-capturing cameras can cause a number of problems. For example, low-resolution cameras need faces positioned directly in front of them and filling the entire frame, or they won’t be able to detect anything. Detecting faces from a distance requires better cameras and better lighting. Furthermore, tasks like recognizing and comparing.
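How much detail a camera captures can be estimated before buying it. Under a simple pinhole-camera model, a face of width w at distance d spans roughly w / (2 · d · tan(FOV/2)) × image width pixels. The sketch below assumes an average face width of about 15 cm and treats the minimum face size a detector needs as a parameter, since that depends on the detector used.

```python
import math


def face_width_pixels(image_width_px: int,
                      horizontal_fov_deg: float,
                      distance_m: float,
                      face_width_m: float = 0.15) -> float:
    """Approximate width of a face in the image (pinhole model; 0.15 m face width is an assumption)."""
    # Width of the scene covered by the image at the face's distance.
    scene_width_m = 2 * distance_m * math.tan(math.radians(horizontal_fov_deg) / 2)
    return face_width_m / scene_width_m * image_width_px


def max_detection_distance_m(image_width_px: int,
                             horizontal_fov_deg: float,
                             min_face_px: float,
                             face_width_m: float = 0.15) -> float:
    """Farthest distance at which a face still spans at least `min_face_px` pixels."""
    half_fov = math.radians(horizontal_fov_deg) / 2
    return face_width_m * image_width_px / (min_face_px * 2 * math.tan(half_fov))


print(round(face_width_pixels(1920, 90, 1.0)))  # 144: full HD camera, face 1 m away
print(round(face_width_pixels(640, 90, 5.0)))   # 10: low-resolution camera, face 5 m away
```

With these assumptions, a 640-pixel-wide camera with a 90° field of view renders a face 5 m away only about 10 pixels wide, which is why low-resolution cameras need faces close up and filling the frame.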

Here’s where Mooncascade comes in

When attempting to use face detection or recognition for business purposes, try to define what you’d like to accomplish as clearly as possible beforehand. General algorithms that must work for millions of people in varying lighting conditions are much harder to develop.

What will your use case actually look like? Does it have to be general? Maybe it only requires developing an algorithm that works for images in full HD, with good lighting and controlled conditions. Remember that many of the questions you’ll have to address when dealing with machine learning will be specific to each project, and require personalized solutions.

Luckily, you don’t have to struggle through these technical details on your own. At Mooncascade, our team of talented data scientists is here to help solve a wide range of machine learning and big data problems. Not only do we consult with you about various approaches and methodologies, we also have the tools to implement the solutions that will best benefit your business.

Conclusion

In this article, we explored the possibilities of face c

If you happen to be interested in using face classification in your product or